VMware Hybrid Cloud Extension (HCX) enables organisations to bridge on-premises and cloud environments to protect and migrate workloads with ease. HCX has been around in some form for a long time and has gone through multiple versions and enhancements. With the uptake of VMware Cloud on AWS growing by the minute, more and more customers are using HCX to provide infrastructure hybridity.

One of the key things I learned when digging into HCX is that it supports source environments all the way back to vSphere 5.0, as well as non-VMware platforms (OS Assisted Migration using the Sentinel software), which is fantastic when you have ageing hardware and plan to move to the cloud.

One of the questions I get asked when we talk about HCX and VMware Cloud on AWS is why. There are a few key items that normally come up, such as “Why would I move to a hyperscale cloud provider and run VMware?” The easy answer is that the alternative means refactoring workloads to the native format of the cloud (EC2, ARM or Compute Engine), which can add an extra level of complexity to a migration and also makes it hard to move back on-premises or to another cloud provider at a later stage. In my view, having a standard format across my clouds makes sense and provides the ability to move workloads where I want them, which gives me that multi-cloud story.

HCX can be deployed in multiple configurations between environments and can suit most topologies. HCX is also used for many different approaches: datacentre extension, cloud extension, disaster recovery/avoidance and datacentre evacuation.

The HCX core components below need to be deployed before you can start moving or protecting workloads.

HCX Connector/Cloud

In previous versions this was called the HCX Manager, and it is still called this in some documentation. An HCX Connector is normally the on-premises appliance at the site the workloads will be migrated from. The HCX Cloud is the corresponding appliance in the cloud or at the destination location. These are the management-level appliances that are used to connect to vCenter Server and perform configuration tasks (if not using the vSphere UI plugin).

Service Mesh: at this point we need to talk about the Service Mesh, the construct that consists of the remaining three required appliances.

A Service Mesh specifies a local and remote pair. When a Service Mesh is created, the HCX Service appliances are deployed on both the source and destination sites and automatically configured by HCX to create the secure optimized transport fabric. This construct provides the ability to manage, update and configure the system in a controlled manner.

HCX Hybrid Interconnect

HCX Hybrid Interconnect is responsible for migration and cross-cloud vMotion capabilities at the target site while providing strong encryption, traffic engineering and virtual machine mobility.

HCX WAN Optimization

HCX WAN Optimization provides de-duplication and compression to improve network performance when copying data.
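To give a feel for why de-duplication and compression help, here is a toy Python sketch. It is not how the HCX appliance works internally; the fixed 4 KiB chunk size, the SHA-256 digests and the function name `transfer_size` are all my own assumptions for illustration. It just counts how many bytes would cross the wire if repeated chunks were sent as a reference and new chunks were compressed.

```python
import hashlib
import zlib


def transfer_size(data: bytes, chunk_size: int = 4096) -> int:
    """Return the number of bytes 'sent' after chunk de-duplication and compression.

    Toy model only: chunk size and hashing scheme are illustrative assumptions.
    """
    seen = set()  # digests of chunks the receiving side already holds
    sent = 0
    for start in range(0, len(data), chunk_size):
        chunk = data[start:start + chunk_size]
        digest = hashlib.sha256(chunk).digest()
        if digest in seen:
            sent += len(digest)  # duplicate chunk: send only the reference
            continue
        seen.add(digest)
        # New chunk: send the digest plus the compressed payload.
        sent += len(digest) + len(zlib.compress(chunk))
    return sent


# A made-up "disk" with lots of repeated blocks, as you often see in VM images.
disk = (b"A" * 4096) * 100
print(len(disk), "bytes raw,", transfer_size(disk), "bytes after de-dup and compression")
```

The more repetitive the data, the bigger the saving, which is why this matters most when copying many similar virtual machines over a slow link.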

HCX Network Extension

The HCX Network Extension appliance enables you to extend one or more layer 2 networks from the source site to the remote site. The subnet gateway stays at the source site, and this lets you move workloads to the remote location while retaining the IP and MAC details of the virtual machine.

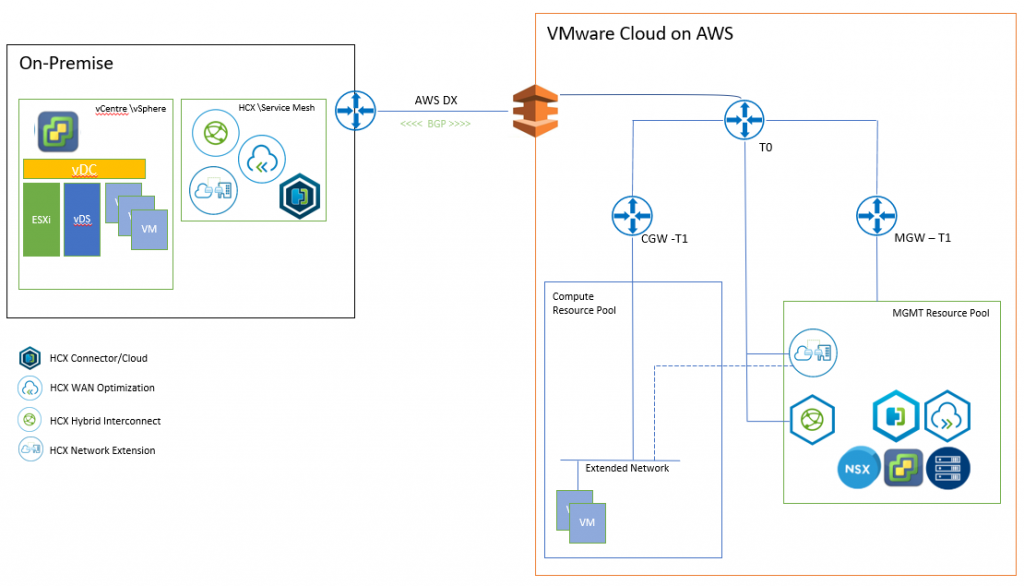

The diagram above shows an on-premises deployment connected to a VMware Cloud on AWS remote site.

The initial configuration of the HCX managers needs to be completed first:

- Activated

- Connected to a vCenter Server

- Paired with other HCX managers

Then it is time to deploy the Service Mesh. Before this can be instantiated, compute and network profiles are required; these are used to configure the Service Mesh appliances and the communication paths.

Network Profiles

- Management Network: used by the appliances for core services like DNS and NTP, and to communicate with other infrastructure components like HCX Manager, vCenter Server, NSX, ESXi hosts, etc.

- vMotion Network: used for vMotion traffic; commonly uses the ESXi vMotion network.

- vSphere Replication Network: used by the interconnect appliances for vSphere Replication traffic.

- Uplink Network: used for inter-cloud communication, e.g. the WAN.

Network profiles can be backed by a Distributed (vDS) or Standard (vSS) Switch port group, or by an NSX logical segment.

Note: If you want to extend a network across sites using the Network Extension feature in HCX, you cannot use a vSS.
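Before opening the wizard I find it useful to sanity-check the plan on paper. The Python sketch below is a planning aid only, not an HCX API: the `Network` class, the role names and `check_plan` are hypothetical names I made up, and it simplifies things by assuming one network per traffic type. It checks that all four network profile types are covered and flags any network you intend to extend that sits on a standard switch.

```python
from dataclasses import dataclass

# The four traffic types described above (names are my own shorthand).
REQUIRED_ROLES = ("management", "vmotion", "replication", "uplink")
# Backing types named in the text: distributed switch, standard switch, NSX segment.
BACKINGS = ("vds", "vss", "nsx")


@dataclass(frozen=True)
class Network:
    name: str
    backing: str  # one of BACKINGS


def check_plan(profiles: dict, extended: list) -> list:
    """Return human-readable problems; an empty list means none were found.

    profiles maps a role name to the Network backing that profile.
    extended lists the Networks to be stretched with Network Extension.
    """
    problems = []
    for role in REQUIRED_ROLES:
        if role not in profiles:
            problems.append(f"missing network profile: {role}")
    for net in list(profiles.values()) + list(extended):
        if net.backing not in BACKINGS:
            problems.append(f"unknown backing for {net.name}: {net.backing}")
    for net in extended:
        if net.backing == "vss":
            problems.append(f"cannot extend {net.name}: standard switch (vSS)")
    return problems


# Example plan with made-up port group names.
mgmt = Network("pg-mgmt", "vss")
plan = {"management": mgmt, "vmotion": Network("pg-vmotion", "vds"), "uplink": mgmt}
for problem in check_plan(plan, [Network("pg-app", "vss")]):
    print(problem)
```

In this example the same management network is reused for the uplink, which is fine for the check, but the replication profile is missing and the application port group cannot be extended because it lives on a vSS.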

Compute Profile: This profile contains the compute, storage and network configuration that will be used for the Service Mesh deployment. It also contains the HCX services that are enabled, which depends on the licence you have activated in the HCX Connector (Advanced/Enterprise). Enterprise has extra features like Replication Assisted vMotion, OS Assisted Migration (KVM/Hyper-V), Mobility Groups and VMware Site Recovery Manager integration.

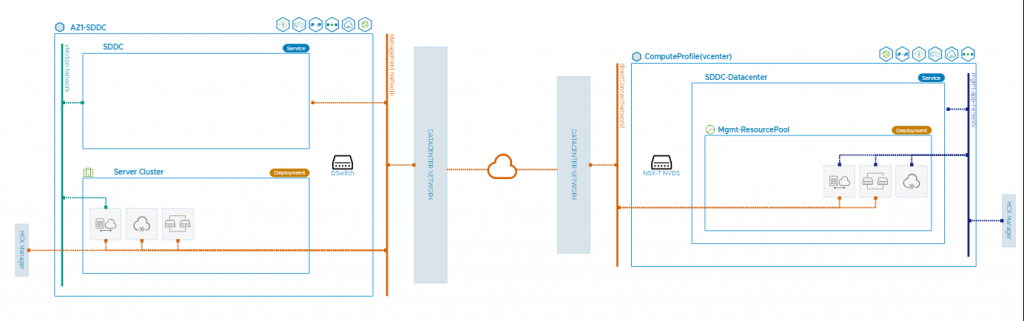

Once these are configured you will be able to deploy a Service Mesh. To do this, use the Service Mesh wizard in the Interconnect tab > Multi-Site Service Mesh > Service Mesh and provide the required configuration, e.g. compute profile and network profiles. Once completed, you will be presented with a Service Mesh topology like the one below.

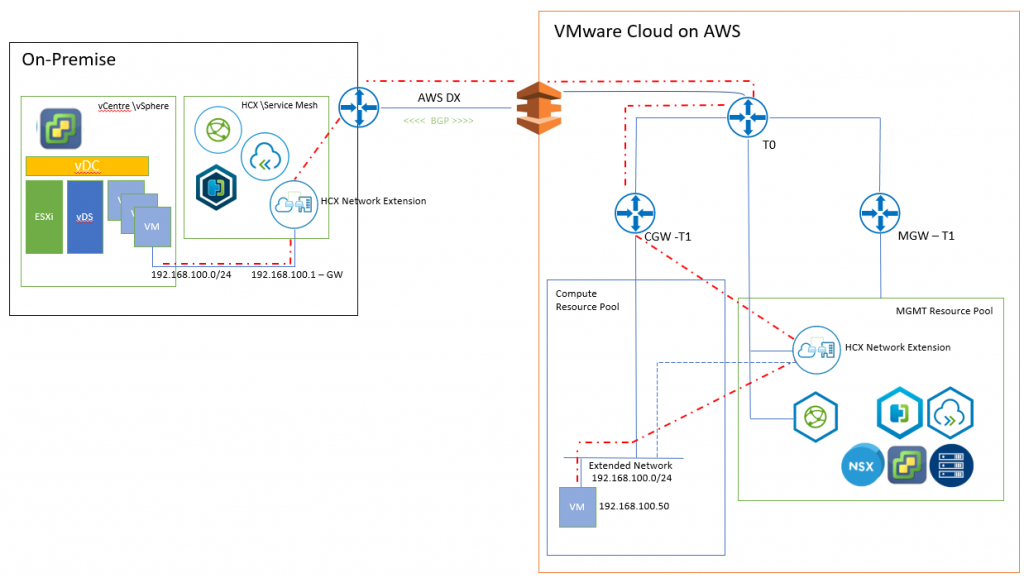

My favourite part of Service Mesh is the Network Extension feature, which allows you to extend your local on-premises layer 2 network into the cloud. This means that when migrating workloads from the source site to the cloud you do not need to re-IP the server or make major configuration changes. This is achieved by the subnet's default gateway remaining at the source site and the Network Extension appliances carrying the traffic from the remote site back to the source site. Depending on the deployment type, you can also use proximity routing to route the traffic within the site if the source and destination are both there, which stops the traffic from having to traverse the WAN and back. Currently this is only available for NSX-V; at the time of writing, VMware Cloud on AWS (NSX-T) does not support this feature.
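The effect of the gateway staying at the source site is easier to see with a small model. The Python sketch below is my own simplification, not anything HCX exposes: `routed_path` and `wan_crossings` are hypothetical helpers, the sites are just the strings "source" and "remote", and it only considers routed traffic (traffic that has to pass through the gateway, e.g. between two different extended subnets).

```python
def routed_path(src_site: str, dst_site: str, gateway_site: str = "source",
                proximity_routing: bool = False) -> list:
    """List the sites a routed packet visits between two VMs on extended networks.

    Assumes the default gateway lives at gateway_site, as described above.
    """
    if proximity_routing and src_site == dst_site:
        return [src_site]  # routed locally, no WAN crossing
    path = [src_site]
    if src_site != gateway_site:
        path.append(gateway_site)  # cross the WAN to reach the gateway
    if dst_site != path[-1]:
        path.append(dst_site)  # cross the WAN to reach the destination
    return path


def wan_crossings(path: list) -> int:
    """Each hop between consecutive sites in the path is one WAN crossing."""
    return len(path) - 1


# Two migrated VMs talking to each other in the cloud, without proximity routing.
hairpin = routed_path("remote", "remote")
print(hairpin, wan_crossings(hairpin))

# The same pair with proximity routing enabled.
local = routed_path("remote", "remote", proximity_routing=True)
print(local, wan_crossings(local))
```

Without proximity routing, the two cloud-side VMs send their routed traffic over the WAN to the gateway and back again, which is exactly the round trip the feature is meant to avoid.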

OK, so now that we have the Service Mesh deployed and Network Extension configured, let’s move a server.

There are a few options for how to attack the migration, depending on your requirements and, as mentioned above, on your licences and available feature set.

Migration Options:

HCX Bulk Migration uses the built-in host-based replication technology to copy the data to the remote site. As the name suggests, this is designed for moving multiple servers at once, and it can be scheduled or run immediately. The source server will be shut down when the migration is ready and the remote copy will be powered on. This will also rename the source VM and disconnect its network. This is great for out-of-hours, bulk, parallel workload migrations.

HCX vMotion uses the same technology that vCenter vMotion does. It can only work on a single VM at a time, but provides a minimal-downtime (a couple of ping drops) method to migrate.

HCX Replication Assisted vMotion (RAV) is a mixture of Bulk Migration and vMotion. It sets up replication for one or more virtual machines and then, when it is ready to migrate, instead of turning off the VM like a normal Bulk Migration it starts a vMotion. This means you can pre-stage your workloads and schedule them for execution out of hours with zero downtime. Very cool!!! Note that this requires Enterprise licensing.

HCX OS Assisted Migration uses the Sentinel agent, which is installed in the VM and establishes a replication connection to the remote vSphere site. This method is only really used when coming from a non-vSphere platform. It also requires an Enterprise licence and the deployment of the HCX Sentinel Gateway appliance and the HCX Data Receiver.
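To pull the options together, here is how I would roughly sketch the choice in Python. This is just my rule of thumb based on the descriptions above, not official guidance, and `Migration` and `suggest_migration` are hypothetical names rather than anything from an HCX SDK.

```python
from enum import Enum


class Migration(Enum):
    BULK = "HCX Bulk Migration"
    VMOTION = "HCX vMotion"
    RAV = "HCX Replication Assisted vMotion"
    OSAM = "HCX OS Assisted Migration"


def suggest_migration(on_vsphere: bool, vm_count: int, downtime_ok: bool,
                      enterprise: bool):
    """Suggest an option from the list above, or None if nothing fits."""
    if not on_vsphere:
        # Non-vSphere sources need OS Assisted Migration, which is Enterprise only.
        return Migration.OSAM if enterprise else None
    if downtime_ok:
        return Migration.BULK  # scheduled cut-over with a reboot at switchover
    if vm_count == 1:
        return Migration.VMOTION  # live move of a single VM
    if enterprise:
        return Migration.RAV  # pre-staged replication, then a vMotion switchover
    return Migration.VMOTION  # without Enterprise: live moves, one VM at a time


# Fifty vSphere VMs that cannot take downtime, with an Enterprise licence.
choice = suggest_migration(on_vsphere=True, vm_count=50, downtime_ok=False, enterprise=True)
print(choice.value)
```

Real migrations usually mix these, for example Bulk Migration for the long tail of servers and vMotion or RAV for the handful that cannot go down.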